Passwords are the primary mode of authentication, yet they are rarely studied or monitored because of their sensitive nature. In our paper, we introduced Gossamer, a framework for securely measuring password-based logins [1], along with a simulation method for measuring the risk of logging individual password-derived measurements. We then deployed Gossamer at two large universities, monitored 34 million login requests over several months, and reported on the resulting data.

Although password-based authentication is still widely used across the Web, little is known about the types of passwords submitted by users or attackers to a login system. This is primarily because passwords are very sensitive; submitted passwords that result in login failure are also risky to monitor, as they could be users’ real passwords on other web services or a typo of their real password. Industry practitioners have largely shied away from investigating passwords themselves because of the high risk, with a few exceptions [2, 3]. However, certain questions can only be answered through analyzing submitted passwords as observed by a login system. For example, how often is a mistyped variant of the actual user password submitted against a user account? How often are breached passwords submitted? How often is the submitted password a close variant of a breached password? And more broadly, can we use the submitted passwords, especially the ones that are not correct, to differentiate legitimate users from attackers? These insights can help us understand user behavior, inform policy, and develop countermeasures to attacks on password-based login systems.

To answer these questions, however, we need (a) a framework for securely logging information about submitted passwords without undermining their safety, and (b) a partnering login system with a large active user base. A simulated login environment, such as the Amazon Mechanical Turk (AMT) setups used in many previous password studies, would miss attacker behavior, and users may behave differently when they know they are being monitored. In our paper, we introduce a framework called Gossamer [1] for measuring password-derived information in live login systems. Here, we describe the process behind its design.

We presented the research idea to the IT departments at two large universities, which run web login systems with more than 500k users combined. Fortunately, both IT departments were excited about the idea and willing to collaborate with us on a system that could safely perform measurements on passwords without compromising the performance or security of their web login systems. Over several months we iterated on several designs, trying to satisfy IRB policies while meeting the operational constraints of the IT departments' infrastructure. For example, to avoid handling any identifiable information, we wanted to store only a keyed hash of the usernames associated with login requests and to delete the key once data collection was over; however, this would have prevented us from reporting compromised users that we discovered through our instrumentation to the IT departments. On the other hand, protecting usernames with standard (randomized) encryption would not let us tell whether two login requests contain the same username, preventing us from aggregating login requests by username for downstream analysis. We therefore used a deterministic encryption algorithm to protect usernames, with the encryption key held by the relevant IT security office.
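The username trade-off can be made concrete with a small sketch of deterministic encryption. The post does not say which deterministic scheme Gossamer uses, so the code below is an assumption: a toy SIV-style construction built only from Python's `hmac` and `hashlib`, in which the IV is a MAC of the username. Equal usernames therefore produce equal tokens (so requests can be grouped), while the key holder can still decrypt a token to report a compromised account. The names `det_encrypt`, `det_decrypt`, and `_keystream` are hypothetical, and the construction is for illustration only; a real deployment should use a vetted deterministic AEAD such as AES-SIV (RFC 5297).

```python
import hashlib
import hmac
import os

SIV_LEN = 16  # bytes of synthetic IV prepended to every token


def _keystream(enc_key: bytes, iv: bytes, length: int) -> bytes:
    """HMAC-SHA256 in counter mode, used here as a toy stream cipher."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        out += hmac.new(enc_key, iv + counter.to_bytes(8, "big"), hashlib.sha256).digest()
        counter += 1
    return bytes(out[:length])


def det_encrypt(enc_key: bytes, mac_key: bytes, plaintext: bytes) -> bytes:
    # The IV is derived from the plaintext, so equal usernames give equal tokens.
    siv = hmac.new(mac_key, plaintext, hashlib.sha256).digest()[:SIV_LEN]
    stream = _keystream(enc_key, siv, len(plaintext))
    return siv + bytes(p ^ s for p, s in zip(plaintext, stream))


def det_decrypt(enc_key: bytes, mac_key: bytes, token: bytes) -> bytes:
    # Only the key holder (e.g. the IT security office) can recover the username.
    siv, body = token[:SIV_LEN], token[SIV_LEN:]
    stream = _keystream(enc_key, siv, len(body))
    plaintext = bytes(c ^ s for c, s in zip(body, stream))
    expected = hmac.new(mac_key, plaintext, hashlib.sha256).digest()[:SIV_LEN]
    if not hmac.compare_digest(siv, expected):
        raise ValueError("token was modified or encrypted under a different key")
    return plaintext


if __name__ == "__main__":
    enc_key, mac_key = os.urandom(32), os.urandom(32)  # held by the key custodian
    t1 = det_encrypt(enc_key, mac_key, b"alice")
    t2 = det_encrypt(enc_key, mac_key, b"alice")
    print(t1 == t2)                           # True: requests can be linked
    print(det_decrypt(enc_key, mac_key, t1))  # b'alice'
```

The tokens reveal only equality between usernames, which is exactly the linkage the downstream analysis needs; a keyed hash would reveal the same equality but could not be reversed by the IT security office.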

We eventually settled on four design principles, which guided our infrastructural and design decisions throughout the process:

1. Least privilege access to password data

We wanted to ensure that the researchers and engineers involved receive only the information necessary for maintaining the service and analyzing login behavior.

2. Periodic deletion

To further minimize risk in the unlikely event of a complete system compromise, all sensitive data older than 24 hours must be expunged.

3. Safe-on-reboot

All sensitive data should be destroyed on reboot. This principle was introduced in Bunker by Miklas et al. [4].

4. Bounded-leakage logging

Since passwords are very sensitive, any password-derived measurements we log must be carefully chosen to bound how much they could speed up a potential password-guessing attack.

The fourth design principle required us to estimate the risk of storing password-derived measurements should an attacker obtain them. For example, suppose an attacker has a list of passwords and is mounting an optimal frequency-based attack against a user account based on their knowledge of the password distribution. The attacker guesses the most frequent password first, then the second most frequent, and so on. If the attacker is targeting a specific password and knows some information about it, they can filter the guess list and potentially speed up the attack. Suppose the attacker knows that the target password has a zxcvbn score of 3 (zxcvbn is a popular password strength meter); they can then discard every candidate that does not have a zxcvbn score of 3.
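The filtering attack described above can be sketched in a few lines of Python. This is an illustrative sketch, not Gossamer's code: `frequency_guesses` and `guesses_needed` are hypothetical names, and `str.isdigit` stands in for a leaked measurement such as a zxcvbn score (the zxcvbn library itself is not used). The attacker orders candidates by observed frequency and, when a measurement of the target leaks, skips every candidate whose measurement differs.

```python
from collections import Counter
from typing import Callable, Hashable, Iterable, Iterator, Optional

Leak = Callable[[str], Hashable]  # hypothetical: a measurement the attacker learns


def frequency_guesses(observed: Iterable[str], leak: Optional[Leak] = None,
                      leaked_value: Hashable = None) -> Iterator[str]:
    """Yield candidates most-frequent first, skipping any that contradict the leak."""
    for pw, _count in Counter(observed).most_common():
        if leak is None or leak(pw) == leaked_value:
            yield pw


def guesses_needed(target: str, observed: Iterable[str],
                   leak: Optional[Leak] = None) -> Optional[int]:
    """1-based guess number at which the attacker hits `target`, or None."""
    leaked_value = leak(target) if leak is not None else None
    for n, guess in enumerate(frequency_guesses(observed, leak, leaked_value), start=1):
        if guess == target:
            return n
    return None


observed = ["123456"] * 5 + ["password"] * 4 + ["iloveyou"] * 3 + ["sunshine"] * 2
print(guesses_needed("sunshine", observed))               # 4
print(guesses_needed("sunshine", observed, str.isdigit))  # 3
```

Even a one-bit leak moves the target from the fourth guess to the third in this toy list, which is the kind of speedup the bounded-leakage principle aims to limit.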

We simulated this attack using a breach dataset, split into target passwords and the attacker’s guess list. For the zxcvbn measurement specifically, we found that the original zxcvbn score, which ranges from 0 to 4, gave the attacker too much information: it would increase the attacker’s probability of guessing a user’s password by 20%. We therefore remapped the zxcvbn score to ‘0’ (weak passwords, with an original zxcvbn score of 0) and ‘1’ (medium to strong passwords, with a zxcvbn score of 1 or more), which leaked much less information and resulted in an attack speedup of less than 2%. We recommend running a similar simulation to assess the safety of any other password-derived measurements that researchers or practitioners may want to record in the future. A full list of the measurements we logged, and more details about the system, can be found in our paper [1].
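A simulation along these lines might look like the following sketch. The post does not give the exact speedup metric, so this sketch assumes it is the relative increase in the fraction of target passwords cracked within a fixed guess budget. `toy_score` is a hypothetical 0-4 strength function standing in for zxcvbn (it is not zxcvbn's algorithm), `binary_score` mirrors the 0/1 remapping, and all function names are hypothetical.

```python
import random
from collections import Counter
from typing import Callable, Dict, Hashable, List, Optional, Sequence, Tuple

Feature = Callable[[str], Hashable]


def split_breach(passwords: Sequence[str], target_frac: float = 0.5,
                 seed: int = 0) -> Tuple[List[str], List[str]]:
    """Split a breach corpus into targets and a frequency-ordered guess list."""
    shuffled = list(passwords)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * target_frac)
    targets, attacker_view = shuffled[:cut], shuffled[cut:]
    guess_list = [pw for pw, _ in Counter(attacker_view).most_common()]
    return targets, guess_list


def success_rate(targets: Sequence[str], guess_list: Sequence[str], budget: int,
                 feature: Optional[Feature] = None) -> float:
    """Fraction of targets guessed within `budget` tries, filtering by a leaked feature if given."""
    # Bucket the guess list by feature value once; order within a bucket is preserved.
    buckets: Dict[Hashable, List[str]] = {}
    for guess in guess_list:
        key = feature(guess) if feature is not None else None
        buckets.setdefault(key, []).append(guess)
    cracked = 0
    for target in targets:
        key = feature(target) if feature is not None else None
        if target in buckets.get(key, [])[:budget]:
            cracked += 1
    return cracked / len(targets) if targets else 0.0


def toy_score(pw: str) -> int:
    """Hypothetical 0-4 strength score; a stand-in for zxcvbn, NOT its algorithm."""
    classes = sum([any(c.islower() for c in pw), any(c.isupper() for c in pw),
                   any(c.isdigit() for c in pw), any(not c.isalnum() for c in pw)])
    return max(0, min(4, len(pw) // 4 + classes - 2))


def binary_score(pw: str) -> int:
    """Coarsened measurement in the spirit of the remapping: 0 stays 0, 1-4 become 1."""
    return 0 if toy_score(pw) == 0 else 1


def relative_gain(leaky: float, baseline: float) -> float:
    """Relative increase in success rate attributable to the leaked feature."""
    return (leaky - baseline) / baseline if baseline > 0 else float("inf")
```

To use it, split a breach corpus with `split_breach`, compute a baseline `success_rate` without a feature, and compare `relative_gain` for the full and the coarsened feature at the same budget. Because `binary_score` is a function of `toy_score`, the coarse measurement can never help the attacker more than the full score; the simulation quantifies how much of the advantage the coarsening removes.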

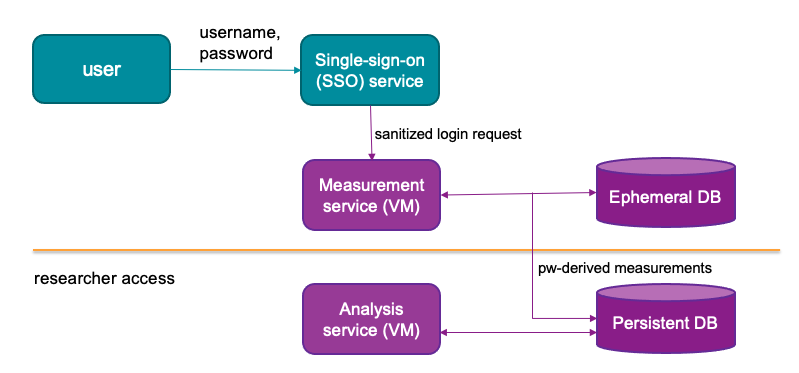

Once we had settled on a set of measurements that would help answer our research questions while giving an attacker minimal benefit in the unlikely event that the measurement logs were compromised (bounded leakage), we designed a system that adheres to our other three design principles. This system, Gossamer, receives a copy of each login request (with a subset of HTTP headers) from the main login server and performs a series of measurements on it.

Gossamer has two levels of storage, one ephemeral and one persistent, so that it can perform measurements across requests without storing sensitive data persistently. For example, we wanted to know how similar the different passwords submitted by an IP address to a user account are to one another, which requires access to previously submitted passwords in plaintext. The ephemeral storage holds the passwords, encrypted under an in-memory key that is rotated every 24 hours, while the persistent storage holds anonymized logs of login requests along with certain statistics computed over the submitted passwords.
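The two-tier storage can be sketched as follows, under several assumptions that go beyond the post. The hypothetical `EphemeralStore` keeps recent submissions only in process memory, indexed by an HMAC of the (username, IP) pair under a per-epoch random key, and discards the key and all entries when it rotates every 24 hours. For brevity it does not encrypt the stored passwords, as Gossamer's ephemeral storage does; discarding the entries models the effect of destroying the key. The hypothetical `measure` function computes one cross-request statistic (the minimum Levenshtein distance to earlier passwords submitted from the same IP to the same account) and appends only that statistic to the persistent log.

```python
import hashlib
import hmac
import os
import time
from typing import Callable, Dict, List, Optional

DAY_SECONDS = 24 * 60 * 60


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance using the standard two-row dynamic program."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        cur = [i]
        for j, cb in enumerate(b, start=1):
            cur.append(min(prev[j] + 1,                # delete ca
                           cur[j - 1] + 1,             # insert cb
                           prev[j - 1] + (ca != cb)))  # substitute (or match)
        prev = cur
    return prev[-1]


class EphemeralStore:
    """Hypothetical memory-only store of recent submissions (never written to disk)."""

    def __init__(self, rotate_every: float = DAY_SECONDS,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._rotate_every = rotate_every
        self._clock = clock
        self._rotate()

    def _rotate(self) -> None:
        # New random key per epoch; the old key and every entry stored under it
        # are dropped together, so nothing outlives one rotation period.
        self._key = os.urandom(32)
        self._epoch_start = self._clock()
        # Simplification: passwords are held unencrypted in memory in this sketch.
        self._entries: Dict[bytes, List[str]] = {}

    def _maybe_rotate(self) -> None:
        # Lazy rotation on access; a hard 24-hour bound would also need a timer.
        if self._clock() - self._epoch_start >= self._rotate_every:
            self._rotate()

    def _slot(self, username: str, ip: str) -> bytes:
        # Index by a keyed hash so raw usernames and IPs are not dictionary keys.
        return hmac.new(self._key, f"{username}\x00{ip}".encode(), hashlib.sha256).digest()

    def recent(self, username: str, ip: str) -> List[str]:
        self._maybe_rotate()
        return list(self._entries.get(self._slot(username, ip), []))

    def remember(self, username: str, ip: str, password: str) -> None:
        self._maybe_rotate()
        self._entries.setdefault(self._slot(username, ip), []).append(password)


def measure(store: EphemeralStore, log: List[dict], user_token: str,
            username: str, ip: str, password: str) -> dict:
    """Compute a cross-request statistic; only the statistic is persisted."""
    prior = store.recent(username, ip)
    min_dist: Optional[int] = min((edit_distance(password, p) for p in prior), default=None)
    # user_token would be, e.g., the deterministically encrypted username.
    record = {"user": user_token, "n_prior": len(prior), "min_edit_distance": min_dist}
    store.remember(username, ip, password)  # raw password stays in memory only
    log.append(record)  # stand-in for the persistent, anonymized log
    return record
```

Injecting the clock makes rotation easy to test, and because nothing in `EphemeralStore` is written to disk, its contents do not survive a reboot, in line with the safe-on-reboot principle.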

Gossamer also uses different privilege levels for different parts of the system. For example, the service that receives login requests and computes statistics over raw passwords has a higher privilege level than the analysis service used to interact with the anonymized data in the persistent database. In principle, the ephemeral database could live on the login server itself, although doing so for distributed login systems would require more thought.

We deployed Gossamer in collaboration with IT security offices in two universities. At University 1 (U1), Gossamer was deployed for 7 months, and at University 2 (U2) it was deployed for 3 months. At U2 we received 24 million requests within 3 months, which was sufficient for answering our initial research questions. However, at U1 we only received 3.9 million requests in 3 months, as we were monitoring a subset of login traffic. We therefore continued monitoring at U1 until a very large, distributed attack bottlenecked all the login servers to the point that IT engineers had to remove any extraneous services. By that time we had collected 10 million login requests from U1.

With the data collected over these periods, we were able to report statistics on user login behavior, especially regarding passwords. For example, we found that 30% of all failed requests at U1 were a typo of the actual password. We also found that 3% of submitted passwords at U1 appeared in a breach, and 6% of valid usernames also appeared in a breach. A full set of statistics and findings can be found in our paper [1]; in summary, we found that breached credential use is still a serious problem, password-based login systems have usability issues, and two-factor authentication adds significant friction to login. The password-derived measurements were very helpful in reaching these conclusions, and we hope this study will motivate their use in developing new login policies and countermeasures.
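The typo statistic depends on how a typo is defined, which the post does not spell out. As a hypothetical example, the sketch below flags a failed submission as a typo when it matches the correct password after one of a few common transformations: caps lock, a flipped first-letter case, or an extra or missing final character. It assumes the measurement service can compare a failed submission with the account's correct password (for instance, one later submitted successfully and held in ephemeral storage); `TYPO_RULES`, `classify_typo`, and `typo_rate` are illustrative names, not Gossamer's API.

```python
from typing import Callable, Dict, Iterable, Optional, Tuple

Rule = Callable[[str, str], bool]


def _flip_first(pw: str) -> str:
    """Swap the case of the first character only."""
    return pw[:1].swapcase() + pw[1:]


# Hypothetical typo rules: each takes (submitted, correct) and returns True on a match.
TYPO_RULES: Dict[str, Rule] = {
    "caps_lock": lambda sub, real: sub == real.swapcase(),
    "first_char_case": lambda sub, real: sub == _flip_first(real),
    "extra_last_char": lambda sub, real: len(sub) == len(real) + 1 and sub[:-1] == real,
    "missing_last_char": lambda sub, real: len(sub) + 1 == len(real) and sub == real[:-1],
}


def classify_typo(submitted: str, correct: str) -> Optional[str]:
    """Return the name of the first matching rule, or None if not a known typo."""
    if submitted == correct:
        return None  # not a failed attempt at all
    for name, rule in TYPO_RULES.items():
        if rule(submitted, correct):
            return name
    return None


def typo_rate(failures: Iterable[Tuple[str, str]]) -> float:
    """Fraction of (submitted, correct) failure pairs classified as typos."""
    pairs = list(failures)
    hits = sum(classify_typo(sub, real) is not None for sub, real in pairs)
    return hits / len(pairs) if pairs else 0.0
```

Rules are checked in dictionary order, so a submission that matches several transformations is attributed to the first one listed.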

We have open-sourced Gossamer, and it can easily be extended with new password-derived measurements. Gossamer can help system administrators securely measure statistics about submitted passwords and make informed policy decisions. Our findings have strong implications for the utility of password-derived measurements, which we hope will be incorporated into future defense mechanisms.